The debate about whether AI can become “sentient” or “conscious” has a long history. A related but separate question is whether an AI has achieved human-level intelligence, or artificial general intelligence (AGI). I want to touch on AGI briefly and get into the question of consciousness a bit more deeply. ChatGPT, Bing, and Bard are built on a type of AI called large language models (LLMs), and it is the behavior of these LLMs that seems different from past AI applications in meaningful ways.

In a recent episode of their podcast, Hard Fork, NYT columnist Kevin Roose and Casey Newton discussed the aftermath of Roose’s infamous interview with Bing and its alter ego Sydney, which I wrote about in A Psycho Says: Hello World! The takeaway from the experiences people are having with the new chatbots is that there is something new here, something significant: something that we don’t understand yet and will need some time and effort to get a handle on.

Newton: So my question for you is, do you really think that there is a ghost in the machine here, or is the prediction just so uncanny that it’s — I don’t know — messing with your brain?

Roose: I know, on an intellectual level, that people, including me, are capable of understanding that these models are not actually sentient, and that they do not actually have emotions and feelings and the ability to form emotional connections with people. I actually don’t know if that matters, though. Like, it feels like we’ve crossed some chasm.

(Hard Fork Podcast Feb 17, 2023)

I’d like to start by making a distinction between sentience and consciousness, which are sometimes used interchangeably to refer to the notion of an AI “waking up” or “coming to life.” Sentience generally means something simpler, the ability to sense or feel, in the way a simple organism like an amoeba does, while consciousness means a higher level of awareness, such that an amoeba may be considered to be sentient, but not conscious.

My position is that an AI that is implemented via computer hardware and software can never be either sentient or conscious, because both are attributes of living, biological organisms, and an inanimate object, no matter how complex, is not logically capable of either. But the arguments on both sides of this question are generally about consciousness, so I’ll follow that thread.

The opposing argument, that an AI can become conscious, is that the brain is a machine that drives our thoughts and consciousness, and therefore a sufficiently faithful representation of the brain, in the form of computer hardware and software, could replicate the function of the brain up to and including consciousness.

When Kevin Roose said “it feels like we’ve crossed some chasm” with the new LLMs, despite his prior intellectual understanding that AI systems are not sentient, I thought of two things: the Turing Test, and the Chinese Room. These are two decades-old notions in AI that I think are being challenged in a new way in February of 2023.

Alan Turing is seen as one of the founding fathers of AI, and he proposed “The Imitation Game” as a test for determining whether a computer program had achieved human-level intelligence. If the AI could (through a text exchange with a human) convince a human that it was a human, it would pass the test. This became known as the Turing test.

Roose and Newton are talking about how the AI feels more human. The fact that LLMs are generating language that is at times convincingly human is resurfacing old questions in a new light. No one is arguing that LLMs are passing the Turing Test, but they are doing something that seems more human than past AIs have felt. How can we be sure they are not conscious? I’m convinced by the arguments of Berkeley philosopher John Searle, among others, who argue that computers are not really thinking in the sense of being aware of what they are doing.

Searle has a punchy summation: “syntax is not semantics,” meaning that the computer is doing computation, processing symbols, and is not aware of the meaning of those symbols. His famous illustration is called the Chinese Room. It goes something like this:

Imagine you are a native English speaker who does not know any Chinese. You are in a room with a stack of cards, and you are going to translate English to Chinese using the cards. The cards have instructions telling you, for any English words you receive from the outside, which Chinese symbol to send back out. You follow the instructions and produce a correct translation of English to Chinese, but you still don’t understand Chinese. By analogy, the computer does not know the meaning of the symbols that humans instruct it to process.
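
For readers who think in code, here is a minimal sketch of the room as I have described it, assuming the cards amount to a simple lookup table. The names `RULE_CARDS` and `room_operator` and the three sample entries are my own illustrative inventions, not anything from Searle.

```python
# Hypothetical rule cards: each maps an incoming English word to the
# Chinese symbol to send back out. The entries are illustrative only.
RULE_CARDS = {
    "hello": "你好",
    "water": "水",
    "thanks": "谢谢",
}


def room_operator(incoming_words):
    """Follow the cards mechanically: look up each word and return
    the symbol on its card, or "?" when no card exists."""
    return [RULE_CARDS.get(word, "?") for word in incoming_words]


# Correct symbols come out, yet nothing in the function represents
# what "hello" or "water" actually mean.
print(room_operator(["hello", "water"]))
```

The operator only matches shapes against cards. Swapping every entry for nonsense symbols would not change how the function runs, which is the sense in which the procedure is all syntax and no semantics.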

This is seen as a powerful argument, which is why it became famous. But Searle made this argument in an earlier age of AI. Translation software had become very effective, but it was simply translating, not generating language. The process under the hood of translation software was arguably analogous to his Chinese Room.

I think it’s significant that LLMs are different in a way that makes a famous argument, one that was effective in the past, seem a bit less convincing today. These models do seem to understand language at some level, if not completely. They learn about the relationships between words by making associations across a large and diverse body of material written by humans, and they encode what they learn in complex ways. That is far from simple definition and translation. It is arguable that LLMs are approaching “semantic” understanding, and thus undercutting the Chinese Room argument.

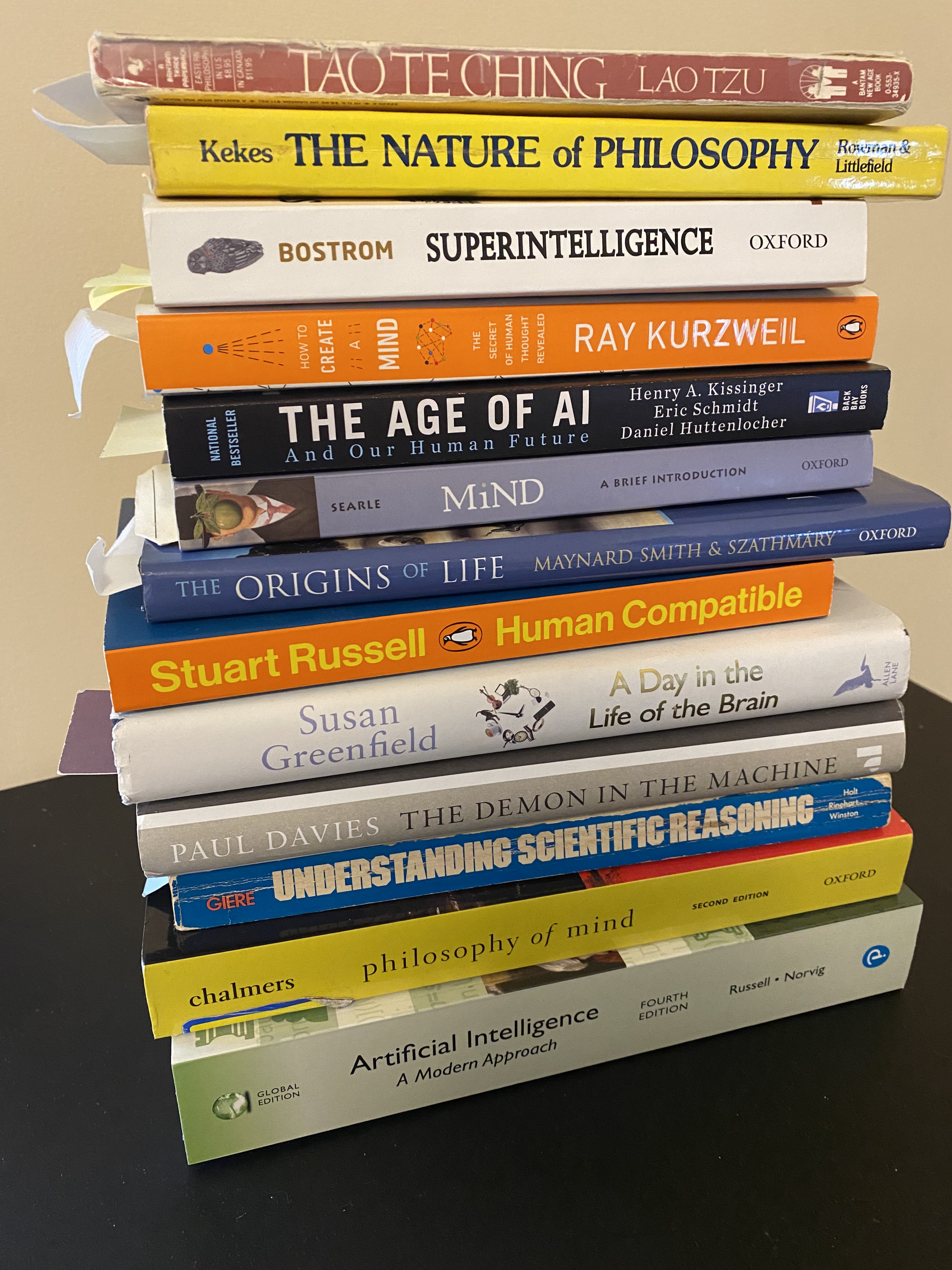

But the Chinese Room is not Searle’s only argument; it’s just one famous argument. He is a philosopher, and this question is a topic in the philosophy of mind. Searle’s take on the philosophy of mind makes a much deeper case against AI consciousness than the Chinese Room argument.

Philosophy of mind is what René Descartes was writing about when he wrote “Cogito, ergo sum” – “I think, therefore I am.” Descartes struggled with the mind-body problem: the questions about the relationship between the body, which is part of the physical world, and the mind, which Descartes believed was part of the spiritual world.

Dualism is the belief that these are two separate modes of existence. But if dualism is true, how can a spirit have an effect on a physical body? For Descartes, this was a deep mystery.

Searle’s philosophy of mind is based on a modern understanding of the mind as the function of the brain, an organ that is a product of evolution and performs a biological function. Consciousness is what the brain does. It’s a biological function, analogous to digestion (though much more complex).

The distinction between the Cartesian philosophy of mind and the modern view of the mind as a product of evolutionary biology is key to understanding the question of whether an AI can be conscious. The pro-consciousness side is leaning on the outdated, but still heavily influential, Cartesian philosophy of mind. In that view, “I think, therefore I am” means “I know I exist, because I am the one who is thinking.” So a computer that is capable of “thinking” can logically exist as a conscious entity or agent.

Searle believes this is a huge mistake, and that it rests on another huge mistake, which is dualism itself. There’s no mystery to the brain causing the body to move, because that’s what it evolved to do. The mind is not a separate spiritual entity, as Descartes believed, and a logical entity is not a mind, as some AI experts, such as Ray Kurzweil, believe.

There’s a lot more to say about consciousness, but as Searle says in his lectures, “I can’t say everything at once.” My conclusion for today is that AI is neither sentient nor conscious, because I agree with Searle that the mind is a biological function of the brain, not a spiritual or logical entity that can become conscious without a living, biological brain.

But I do think the sort of intelligence exhibited by LLMs is something new, and the questions being raised deserve some thought. It looks and feels like a different level of intelligence, a level that gets past syntax and into semantics. It is a change big enough to challenge a famous argument from an earlier era, and it gives us something to think about as we work toward a new understanding of the difference between AI and human intelligence.
